Release as a jar file, release an updated User Guide, peer-test released products, and verify code authorship. Seek code quality comments from your tutor.

v1.3 Summary of Milestone

| Milestone | Minimum acceptable performance to consider as 'reached' |
|---|---|
| Contributed code to v1.3 | code merged |
| Code is RepoSense-compatible | as stated in mid-v1.3 |
| v1.3 jar file released on GitHub | as stated |
| v1.3 milestone properly wrapped up on GitHub | as stated |
| Documentation updated to match v1.3 | at least the User Guide and the README.adoc are updated |

v1.3 Project Management

Ensure your code is RepoSense-compatible, as described below.

Using RepoSense

In previous semesters we asked students to annotate all their code using special @@author tags so that we can extract each student's code for grading. This semester, we are trying out a tool called RepoSense that is expected to reduce the need for such tagging, and also make it easier for you to see (and learn from) code written by others.

1. View the current status of code authorship data:

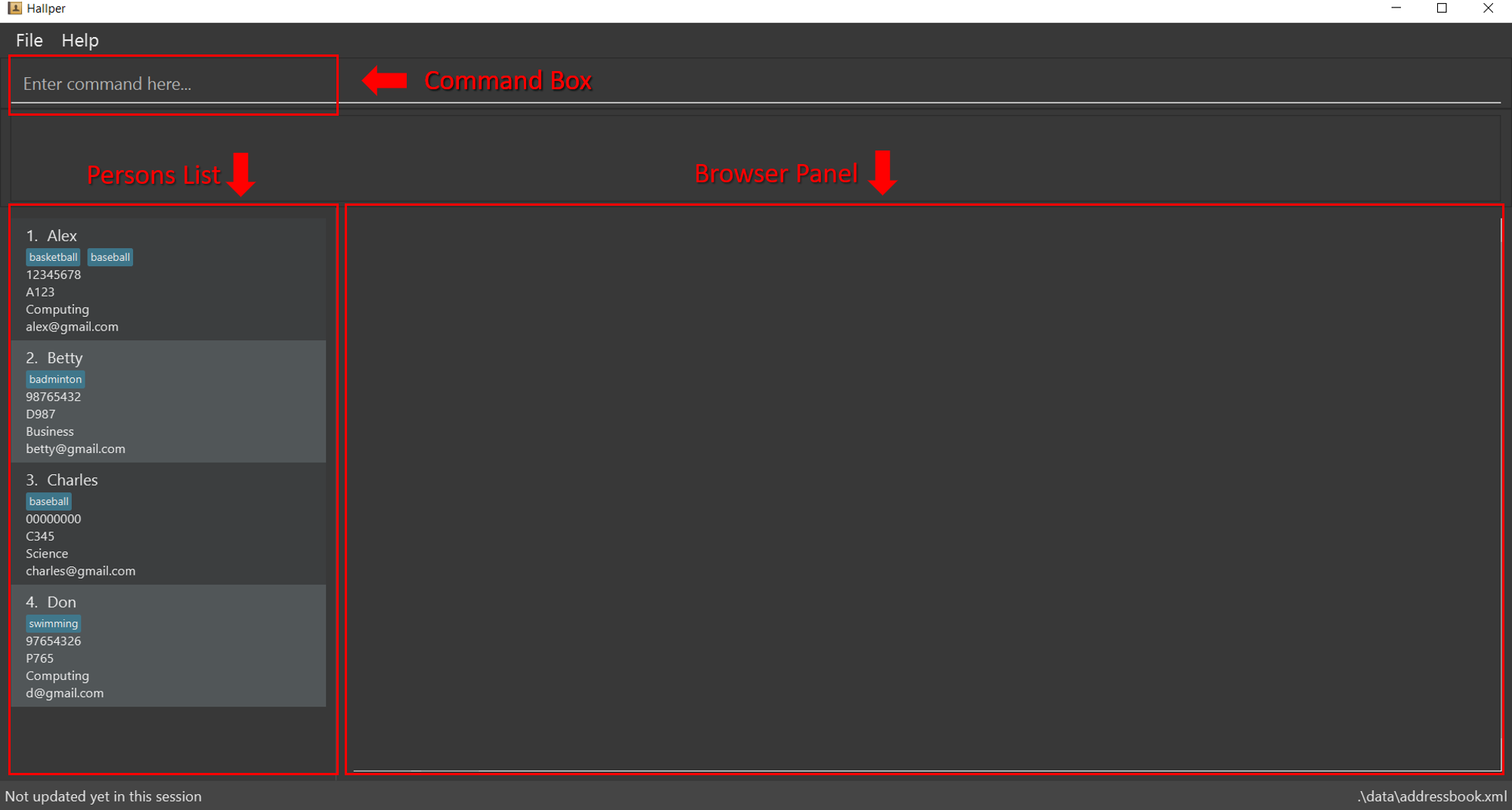

- The report generated by the tool is available at the Project Code Dashboard. The feature that is most relevant to you is the Code Panel (shown on the right side of the screenshot above). It shows the code attributed to a given author. You are welcome to play around with the other features (they are still under development and will not be used for grading this semester).

- Click on your name to load the code attributed to you (based on Git blame/log data) onto the code panel on the right.

- If the code shown roughly matches the code you wrote, all is fine and there is nothing for you to do.

2. If the code does not match:

- Here are the possible reasons for the code shown not to match the code you wrote:

  - the Git username in some of your commits does not match your GitHub username (perhaps you missed our earlier instructions to set your Git username to match your GitHub username, or GitHub did not honor your Git username for some reason)

  - the actual authorship does not match the authorship determined by Git blame/log, e.g., another student touched your code after you wrote it, and Git log attributed the code to that student instead

- In those cases,

- Install RepoSense (see the Getting Started section of the RepoSense User Guide)

- Use the two methods described in the RepoSense User Guide section 'Configuring a Repo to Provide Additional Data to RepoSense' to give the authorship analysis additional data and make it more accurate.

- If you add a `config.json` file to your repo (as specified by one of the two methods):

  - Please use the template JSON file given on the module website so that your display name matches the name we expect it to be.

  - If your commits have multiple author names, specify all of them, e.g., `"authorNames": ["theMyth", "theLegend", "theGary"]`

  - Update the line `config.json` in the `.gitignore` file of your repo to `/config.json` so that it ignores the `config.json` produced by the app but not `_reposense/config.json`.

- If you add `@@author` annotations, please follow the guidelines below:

Adding @@author tags to indicate authorship

- Mark your code with a `//@@author {yourGithubUsername}`. Note the double `@`.

The `//@@author` tag indicates the beginning of the code you wrote. The code up to the next `//@@author` tag or the end of the file (whichever comes first) will be considered as written by that author. Here is a sample code file:

```
//@@author johndoe
method 1 ...
method 2 ...
//@@author sarahkhoo
method 3 ...
//@@author johndoe
method 4 ...
```

- If you don't know who wrote the code segment below yours, you may put an empty `//@@author` (i.e., no GitHub username) to indicate the end of the code segment you wrote. The author of the code below yours can add the GitHub username to the empty tag later. Here is a sample code with an empty `author` tag:

```
method 0 ...
//@@author johndoe
method 1 ...
method 2 ...
//@@author
method 3 ...
method 4 ...
```

- The author tag syntax varies based on file type, e.g., for Java, CSS, FXML. Use the corresponding comment syntax for non-Java files.

Here is an example code from an XML/FXML file:

```xml
<!-- @@author sereneWong -->
<textbox>
  <label>...</label>
  <input>...</input>
</textbox>
...
```

- Do not put the `//@@author` inside Java header comments.

👎

```java
/**
 * Returns true if ...
 * @@author johndoe
 */
```

👍

```java
//@@author johndoe
/**
 * Returns true if ...
 */
```
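To make the attribution rule concrete (a tag applies until the next `//@@author` tag or the end of the file, and an empty tag ends the current segment), here is a minimal Java sketch. It is a hypothetical illustration, not RepoSense's actual implementation: the class and method names are made up, it only recognizes `//`-style tags at the start of a line, and it treats suffixed names such as `johndoe-unused` as plain author names.

```java
import java.util.ArrayList;
import java.util.List;

/**
 * Hypothetical sketch of how //@@author tags map lines to authors.
 * Not RepoSense's real implementation; only handles //-style tags.
 */
public class AuthorTagSketch {

    private static final String TAG = "//@@author";

    /**
     * Returns one entry per input line: the author the line is attributed to,
     * or null if the line is unclaimed (before the first tag, or after an empty tag).
     */
    public static List<String> attribute(List<String> lines) {
        List<String> owners = new ArrayList<>();
        String current = null; // nothing is claimed until the first tag
        for (String line : lines) {
            String trimmed = line.trim();
            if (trimmed.startsWith(TAG)) {
                String name = trimmed.substring(TAG.length()).trim();
                // A named tag starts a new segment; an empty tag just ends the previous one.
                current = name.isEmpty() ? null : name;
            }
            owners.add(current);
        }
        return owners;
    }

    public static void main(String[] args) {
        List<String> file = List.of(
                "method 0 ...",
                "//@@author johndoe",
                "method 1 ...",
                "method 2 ...",
                "//@@author",
                "method 3 ...");
        System.out.println(attribute(file)); // [null, johndoe, johndoe, johndoe, null, null]
    }
}
```

In this model a line stays unclaimed until the first tag, which is why `method 0` in the empty-tag sample is left for its own author to claim.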

What to and what not to annotate

- Annotate both functional and test code. There is no need to annotate documentation files.

- Annotate only code blocks of significant size that can be reviewed on their own, e.g., a class, a sequence of methods, a method.

Claiming credit for code blocks smaller than a method is discouraged but allowed. If you do, do it sparingly and only claim meaningful blocks of code such as a block of statements, a loop, or an if-else statement.

- If an enhancement required you to make tiny changes in many places, there is no need to annotate all those tiny changes; you can describe those changes in the Project Portfolio page instead.

- If a code block was touched by more than one person, either let the person who wrote most of it (e.g. more than 80%) take credit for the entire block, or leave it as 'unclaimed' (i.e., no author tags).

- Related to the above point, if you claim a code block as your own, more than 80% of the code in that block should have been written by yourself. For example, no more than 20% of it can be code you reused from somewhere.

- 💡 GitHub has a blame feature and a history feature that can help you determine who wrote a piece of code.

- Do not try to boost the quantity of your contribution using unethical means such as duplicating the same code in multiple places. In particular, do not copy-paste test cases to create redundant tests. Even repetitive code blocks within test methods should be extracted out as utility methods to reduce code duplication. Individual members are responsible for making sure the code attributed to them is correct. If you notice a team member claiming credit for code that he/she did not write or using other questionable tactics, you can email us (after the final submission) to let us know.

- If you wrote a significant amount of code that was not used in the final product:

  - Create a folder called `{project root}/unused`.

  - Move unused files (or copies of files containing unused code) to that folder.

  - Use `//@@author {yourGithubUsername}-unused` to mark unused code in those files (note the suffix `unused`), e.g.,

```
//@@author johndoe-unused
method 1 ...
method 2 ...
```

Please put a comment in the code to explain why it was not used.

- If you reused code from elsewhere, mark such code as `//@@author {yourGithubUsername}-reused` (note the suffix `reused`), e.g.,

```
//@@author johndoe-reused
method 1 ...
method 2 ...
```

- You can use empty `@@author` tags to mark code as not yours when RepoSense attributes the code to you incorrectly.

- Code generated by the IDE/framework should not be annotated as your own.

- Code you modified in minor ways, e.g., by adding a parameter, should not be claimed as yours, but you can mention these additional contributions in the Project Portfolio page if you want to claim credit for them.

- After you are satisfied with the new results (i.e., results produced by running RepoSense locally), push the `config.json` file you added and/or the annotated code to your repo. We'll use that information the next time we run RepoSense (we run it at least once a week).

- If you choose to annotate code, please annotate code chunks not smaller than a method. We do not grade code snippets smaller than a method.

- If you encounter any problem when doing the above or if you have questions, please post in the forum.

We recommend you ensure your code is RepoSense-compatible by v1.3.
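The "more than 80%" rule for claiming a block can be checked mechanically once you know who wrote each line, e.g., from GitHub's blame view. The Java sketch below is a hypothetical illustration, not part of RepoSense: the names are made up, it assumes line counts are a fair proxy for how much of the block each person wrote, and it ignores reused code, which the guidelines also count against the 80%.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Optional;

/**
 * Hypothetical sketch of the "more than 80%" rule. Input: the author of each line
 * in one code block (e.g., read off a blame view). Output: the author who may claim
 * the whole block, or empty if the block should be left unclaimed.
 */
public class ClaimRuleSketch {

    public static Optional<String> claimant(List<String> lineAuthors) {
        int total = lineAuthors.size();
        if (total == 0) {
            return Optional.empty();
        }
        Map<String, Integer> counts = new HashMap<>();
        for (String author : lineAuthors) {
            counts.merge(author, 1, Integer::sum);
        }
        for (Map.Entry<String, Integer> entry : counts.entrySet()) {
            // count / total > 0.8, rewritten in integers to avoid floating-point edge cases
            if (entry.getValue() * 10 > total * 8) {
                return Optional.of(entry.getKey()); // at most one author can exceed 80%
            }
        }
        return Optional.empty(); // nobody wrote more than 80%: leave the block unclaimed
    }

    public static void main(String[] args) {
        List<String> block = new ArrayList<>(Collections.nCopies(9, "johndoe"));
        block.add("sarahkhoo");
        System.out.println(claimant(block)); // 9 of 10 lines: Optional[johndoe]

        List<String> shared = new ArrayList<>(Collections.nCopies(8, "johndoe"));
        shared.addAll(Collections.nCopies(2, "sarahkhoo"));
        System.out.println(claimant(shared)); // exactly 80% is not enough: Optional.empty
    }
}
```

The strict comparison mirrors the wording "more than 80%": a block split 8:2 between two people is left unclaimed.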

v1.3 Product

- As before, move the product towards v2.0.

- Do a proper product release as described in the Developer Guide. Do some manual tests to ensure the jar file works.

v1.3 Documentation

The v1.3 User Guide should be updated to match the current version of the product. Reason: v1.3 will be subjected to a trial acceptance testing session.

- README page: Update to look like a real product (rather than a project for learning SE) if you haven't done so already. In particular, update the

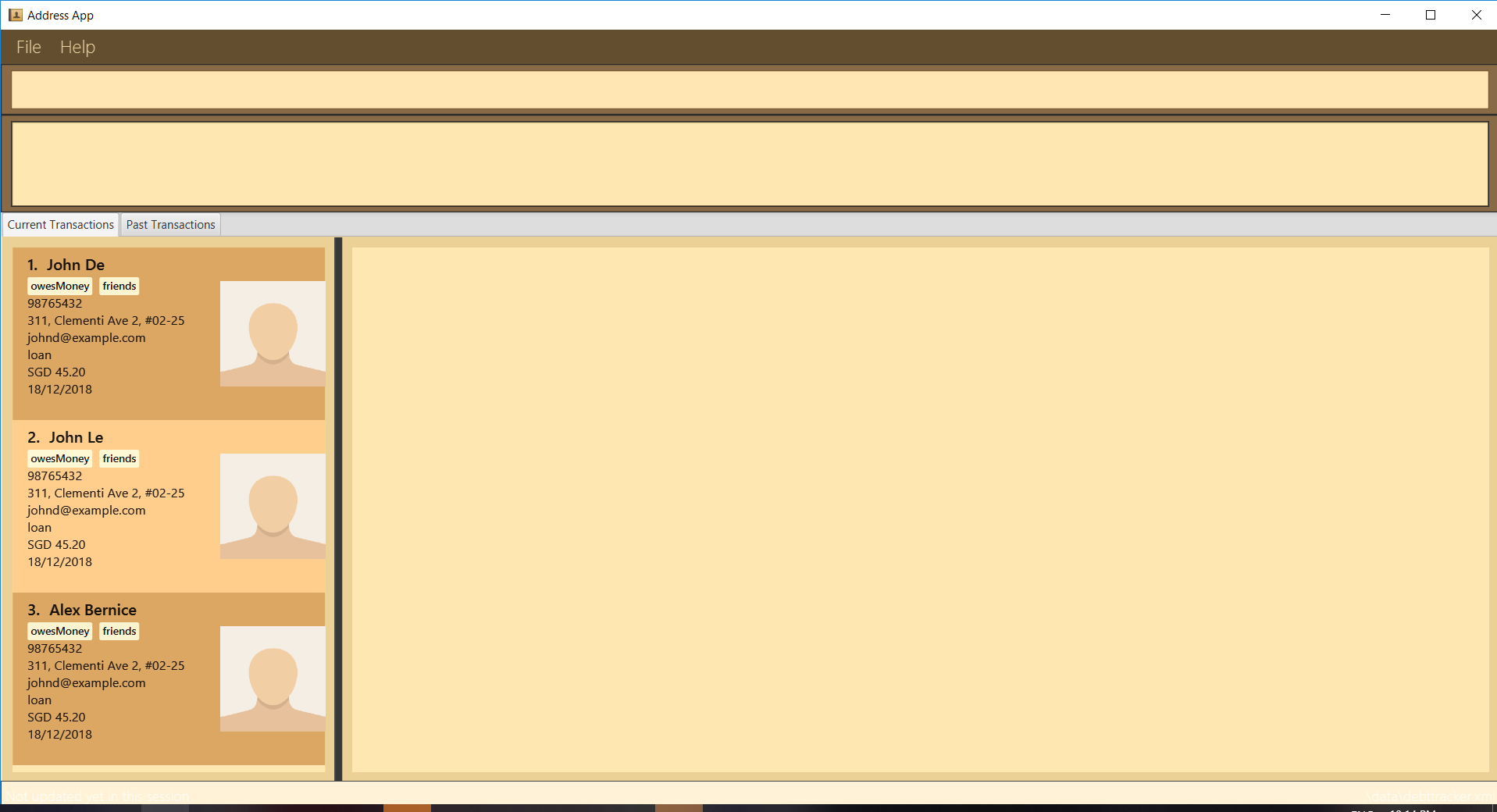

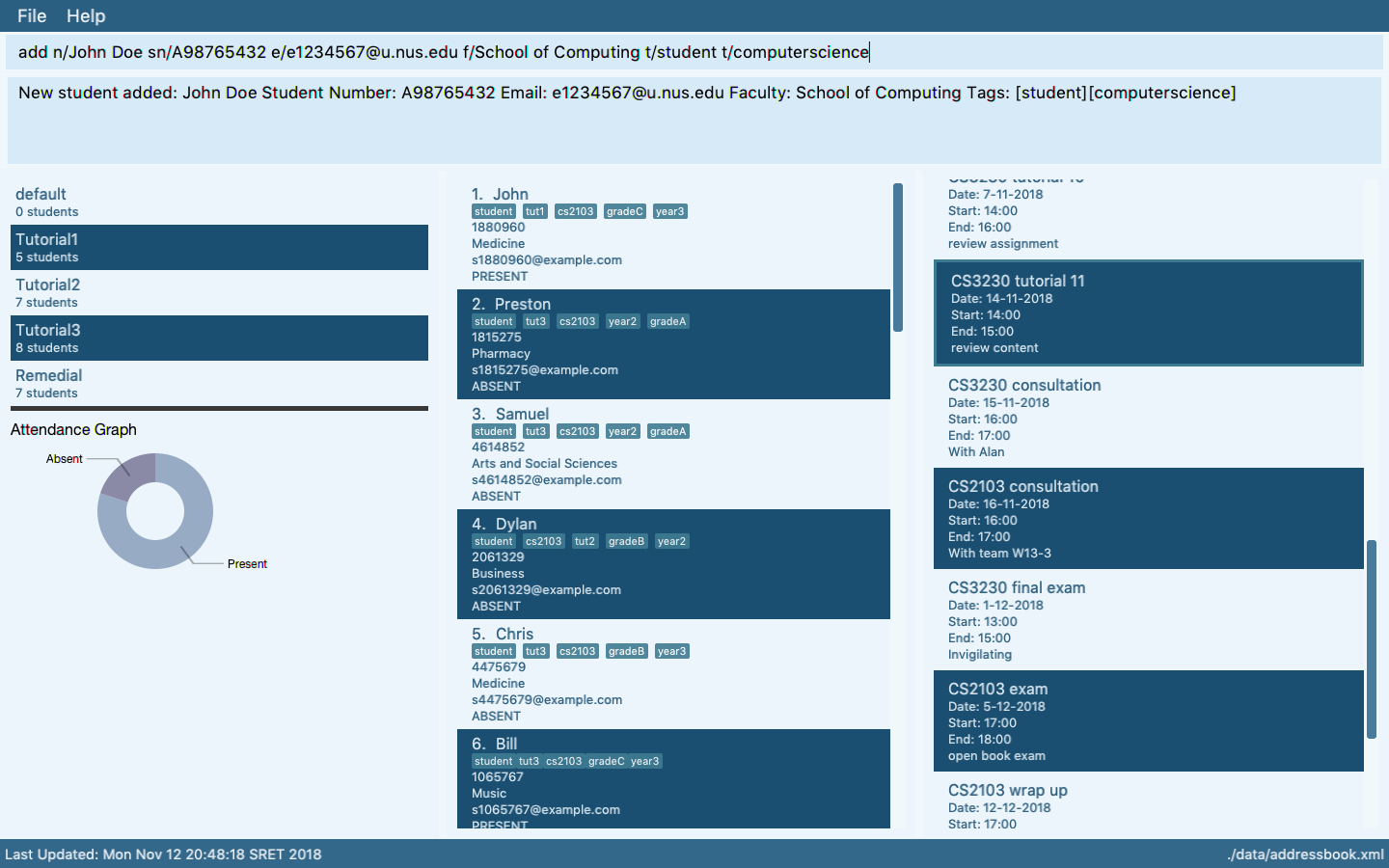

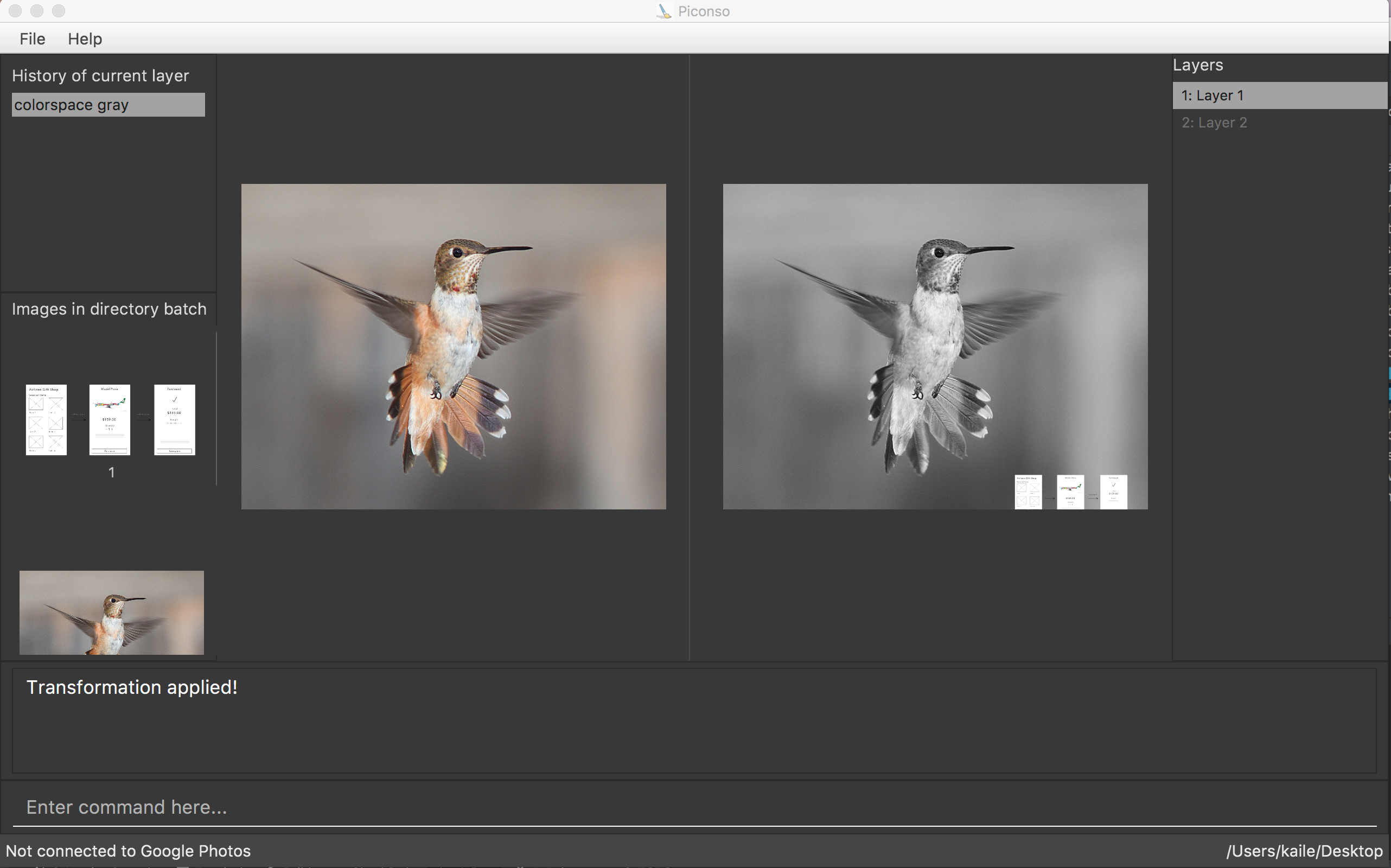

Ui.pngto match the current product (💡 tips ).

💡 Some common sense tips for a good product screenshot

Ui.png represents your product in its full glory.

- Before taking the screenshot, populate the product with data that makes the product look good. For example, if the product is supposed to show photos, use real photos instead of dummy placeholders.

- It should show a state in which the product is well-populated i.e., don't leave data panels largely blank

- Choose a state that showcases the main features of the product, i.e., the login screen is not usually a good choice.

- Avoid annotations (arrows, callouts, explanatory text, etc.); it should look like the product is being used for real.

- User Guide: This document will be used by acceptance testers. Update it to match the current version. In particular,

  - Clearly indicate which features are not implemented yet, e.g., tag those features with a `Coming in v2.0`.

  - For those features already implemented, ensure their descriptions match the exact behavior of the product, e.g., replace mockups with actual screenshots.

- Developer Guide: As before, update if necessary.

- AboutUs page: Update to reflect the current state of roles and responsibilities.

Submission: Must be included in the version tagged v1.3.

v1.3 Demo

- Do a quick demo of the main features using the jar file. Objective: demonstrate that the jar file works.

v1.3 Testing (aka Practical Exam Dry Run)

See info in the panel below:

What: v1.3 is subjected to a round of peer acceptance/system testing, also called the Practical Exam (PE) Dry Run, as this round of testing will be similar to the graded Practical Exam described below.

When, where: uses a 45-minute slot at the end of the week 11 lecture.

Objectives:

- Evaluate your manual testing skills, product evaluation skills, and effort estimation skills

- Peer-evaluate your product design, implementation effort, and documentation quality

When, where: Week 13 lecture

Grading:

- Your performance in the practical exam will be considered for your final grade (under the QA category and under Implementation category, about 10 marks in total).

- You will be graded based on your effectiveness as a tester (e.g., the percentage of the bugs you found, the nature of the bugs you found) and how far off your evaluation/estimates are from the evaluator consensus. Explanation: we understand that you have limited expertise in this area; hence, we penalize only if your inputs don't seem to be based on a sincere effort to test/evaluate.

- The bugs found in your product by others will affect your v1.4 marks. You will be given a chance to reject false-positive bug reports.

Preparation:

- Ensure that you can access the relevant issue tracker given below:

  - for PE Dry Run (at v1.3): nus-cs2113-AY1819S2/pe-dry-run

  - for PE (at v1.4): nus-cs2113-AY1819S2/pe (will open only near the actual PE)

  - These are private repos! If you cannot access the relevant repo, you may not have accepted the invitation to join the GitHub org used by the module. Go to https://github.com/orgs/nus-cs2113-AY1819S2/invitation to accept the invitation.

  - If you cannot find the invitation, post in our forum.

- Ensure you have access to a computer that is able to run module projects, e.g., has the right Java version.

- Have a good screen grab tool with annotation features so that you can quickly take a screenshot of a bug, annotate it, and post it in the issue tracker.

  - 💡 You can use Ctrl+V to paste a picture from the clipboard into a text box in the GitHub issue tracker.

- Charge your computer before coming to the PE session. The testing venue may not have enough charging points.

During:

- Take note of your team to test. It will be given to you by the teaching team (distributed via LumiNUS gradebook).

- Download from LumiNUS all files submitted by the team (i.e. jar file, User Guide, Developer Guide, and Project Portfolio Pages) into an empty folder.

- [60 minutes] Test the product and report bugs as described below:

Testing instructions for PE and PE Dry Run

- What to test:

  - PE Dry Run (at v1.3):

    - Test the product based on the User Guide (the UG is most likely accessible using the `help` command).

    - Do system testing first, i.e., does the product work as specified by the documentation? If there is time left, you can do acceptance testing as well, i.e., does the product solve the problem it claims to solve?

  - PE (at v1.4):

    - Test based on the Developer Guide (the appendix named Instructions for Manual Testing) and the User Guide. The testing instructions in the Developer Guide can provide you some guidance, but if you follow those instructions strictly, you are unlikely to find many bugs. You can deviate from the instructions to probe areas that are more likely to have bugs.

    - As before, do both system testing and acceptance testing, but give priority to system testing as system testing bugs can earn you more credit.

- These are considered bugs:

- Behavior differs from the User Guide (or Developer Guide)

- A legitimate user behavior is not handled e.g. incorrect commands, extra parameters

- Behavior is not specified and differs from normal expectations e.g. error message does not match the error

- The feature does not solve the stated problem of the intended user i.e., the feature is 'incomplete'

- Problems in the User Guide e.g., missing/incorrect info

- Where to report bugs: Post bugs in the following issue trackers (not in the team's repo):

  - PE Dry Run (at v1.3): nus-cs2113-AY1819S2/pe-dry-run.

  - PE (at v1.4): nus-cs2113-AY1819S2/pe.

- Bug report format:

- Post bugs as you find them (i.e., do not wait to post all bugs at the end) because the issue tracker will close exactly at the end of the allocated time.

- Do not use team ID in bug reports. Reason: to prevent others copying your bug reports

- Each bug should be a separate issue.

- Write good quality bug reports; poor quality or incorrect bug reports will not earn credit.

- Use a descriptive title.

- Give a good description of the bug with steps to reproduce and screenshots.

- Assign a severity to the bug report. Bug reports without a severity label are considered `severity.Low` (lower severity bugs earn lower credit).

Bug Severity labels:

- `severity.Low`: A flaw that is unlikely to affect normal operations of the product. Appears only in very rare situations and causes a minor inconvenience only.

- `severity.Medium`: A flaw that causes occasional inconvenience to some users, but they can continue to use the product.

- `severity.High`: A flaw that affects most users and causes major problems for users, i.e., makes the product almost unusable for most users.

- About posting suggestions:

- PE Dry Run (at v1.3): You can also post suggestions on how to improve the product. 💡 Be diplomatic when reporting bugs or suggesting improvements. For example, instead of criticising the current behavior, simply suggest alternatives to consider.

- PE (at v1.4): Do not post suggestions. But if a feature is missing a critical functionality that makes the feature less useful to the intended user, it can be reported as a bug.

- If the product doesn't work at all: If the product fails catastrophically, e.g., cannot even launch, please ask the instructor for the fallback team allocated to you.

- [Remainder of the session] Evaluate the following aspects. Note down your evaluation in a hard copy (as a backup). Submit via TEAMMATES. We recommend that you complete this during the PE session, but you have until the end of the day to submit (or revise) your submissions.

- A. Product Design: Evaluate the product design based on how the product v2.0 (not v1.4) is described in the User Guide.

  - unable to judge: You are unable to judge this aspect for some reason, e.g., the UG is not available or does not have enough information.

  - target user specified and appropriate: The target user is clearly specified, prefers typing over other modes of input, and is not too general (should be narrowed to a specific user group with certain characteristics).

  - value specified and matching: The value offered by the product is clearly specified and matches the target user.

  - value: low: The value to the target user is low. The app is not worth using.

  - value: medium: Some small group of target users might find the app worth using.

  - value: high: Most of the target users are likely to find the app worth using.

  - feature-fit: low: Features don't seem to fit together.

  - feature-fit: medium: Some features fit together but some don't.

  - feature-fit: high: All features fit together.

  - polished: The product looks well-designed.

- B. Quality of user docs: Evaluate based on the parts of the User Guide written by the person, as reproduced in the project portfolio. Evaluate from an end-user perspective.

  - UG/ unable to judge: Less than 1 page worth of UG content written by the student, or cannot find the PPP.

  - UG/ good use of visuals: Uses visuals, e.g., screenshots.

  - UG/ good use of examples: Uses examples, e.g., sample inputs/outputs.

  - UG/ just enough information: Not too much information. All important information is given.

  - UG/ easy to understand: The information is easy to understand for the target audience.

  - UG/ polished: The document looks neat, well-formatted, and professional.

- C. Quality of developer docs: Evaluate based on the developer docs cited/reproduced in the respective project portfolio page. Evaluate from the perspective of a new developer trying to understand how the features are implemented.

  - DG/ unable to judge: Less than 0.5 pages worth of content, OR other problems in the document, e.g., it looks like the wrong content was included.

  - DG/ too little: 0.5 to 1 page of documentation.

  - DG/ types of UML diagrams: 1: Only one type of diagram used (types: Class Diagrams, Object Diagrams, Sequence Diagrams, Activity Diagrams, Use Case Diagrams).

  - DG/ types of UML diagrams: 2: Two types of diagrams used.

  - DG/ types of UML diagrams: 3+: Three or more types of diagrams used.

  - DG/ UML diagrams suitable: The diagrams are used for the right purpose.

  - DG/ UML notation correct: No more than one minor error in the UML notation.

  - DG/ diagrams not repetitive: The same diagram is not repeated with minor differences.

  - DG/ diagrams not too complicated: Diagrams don't cram too much information into them.

  - DG/ diagrams integrates with text: Diagrams are well integrated into the textual explanations.

  - DG/ easy to understand: The document is easy to understand/follow.

  - DG/ just enough information: Not too much information. All important information is given.

  - DG/ polished: The document looks neat, well-formatted, and professional.

- D. Feature Quality: Evaluate the biggest feature done by the student for difficulty, completeness, and testability. Note: the examples given below assume that AB4 did not have the commands `edit`, `undo`, and `redo`.

  - Feature/ difficulty: unable to judge: You are unable to judge this aspect for some reason.

  - Feature/ difficulty: low: e.g., make the existing `find` command case insensitive.

  - Feature/ difficulty: medium: e.g., an `edit` command that requires the user to type all fields, even the ones that are not being edited.

  - Feature/ difficulty: high: e.g., `undo`/`redo` command.

  - Feature/ completeness: unable to judge: You are unable to judge this aspect for some reason.

  - Feature/ completeness: low: A partial implementation of the feature. Barely useful.

  - Feature/ completeness: medium: The feature has enough functionality to be useful for some of the users.

  - Feature/ completeness: high: The feature has all the functionality needed to be useful to almost all users.

  - Feature/ not hard to test: The feature was not too hard to test manually.

  - Feature/ polished: The feature looks polished (as if done by a professional programmer).

- E. Amount of work: Evaluate the amount of work on a scale of 0 to 30.

  - Consider this PR (`history` command) as 5 units of effort, which means this PR (`undo`/`redo` command) is about 15 points of effort. Given that 30 points matches an effort twice that needed for the `undo`/`redo` feature (which was given as an example of an A grade project), we expect most students to have efforts lower than 20.

  - Count all implementation/testing/documentation work as mentioned in that person's PPP. Also look at the actual code written by the person.

  - Do not give a high value just to be nice. If your estimate is wildly inaccurate, it means you are unable to estimate the effort required to implement a feature in a project that you are supposed to know well at this point. You may lose marks if your estimate is wildly inaccurate.

Processing PE Bug Reports:

There will be a review period for you to respond to the bug reports you received.

Duration: The review period will start around 1 day after the PE (exact time to be announced) and will last until the following Monday midnight. However, we recommend that you finish this task ASAP to minimize cutting into your exam preparation work.

We recommend reviewing bugs as a team, as some of the decisions need team consensus.

Instructions for Reviewing Bug Reports

- First, don't freak out if there are a lot of bug reports. Many can be duplicates and some can be false positives. In any case, we anticipate that all of these products will have some bugs, and our penalty for bugs is not harsh. Furthermore, the penalty depends on the severity of the bug. Some bugs may not even be penalized.

- Do not edit the subject or the description. Your response (if any) should be added as a comment.

- You may (but are not required to) close the bug report after you are done processing it, as a convenient means of separating the 'processed' issues from 'not yet processed' issues.

- If the bug is reported multiple times, mark all copies EXCEPT one as duplicates using the `duplicate` tag (if the duplicates have different severity levels, you should keep the one with the highest severity). In addition, use this technique to indicate which issue they are duplicates of. Duplicates can be omitted from the processing steps given below.

- If a bug seems to be for a different product (i.e., wrongly assigned to your team), let us know (email the prof).

- Decide if it is a real bug and apply ONLY one of these labels.

Response Labels:

- `response.Accepted`: You accept it as a bug.

- `response.NotInScope`: It is a valid issue but not something the team should be penalized for, e.g., it was not related to features delivered in v1.4.

- `response.Rejected`: What the tester treated as a bug is in fact the expected behavior. The team may lose marks for rejecting a bug without an explanation or with an unjustifiable explanation. This penalty is higher than the penalty if the same bug was accepted. You can reject bugs that you inherited from AB4.

- `response.CannotReproduce`: You are unable to reproduce the behavior reported in the bug after multiple tries.

- `response.IssueUnclear`: The issue description is not clear. Don't post comments asking the tester to give more info. The tester will not be able to see those comments because the bug reports are anonymized.

- If applicable, decide the type of bug. Bugs without a `type.*` label are considered `type.FunctionalityBug` by default (these are liable to a heavier penalty).

Bug Type Labels:

- `type.FeatureFlaw`: Some functionality is missing from a feature delivered in v1.4 in a way that makes the feature less useful to the intended target user for normal usage, i.e., the feature is not 'complete'. In other words, an acceptance testing bug that falls within the scope of v1.4 features. These issues are counted against the 'depth and completeness' of the feature.

- `type.FunctionalityBug`: The bug is a flaw in how the product works.

- `type.DocTypo`: A minor spelling/grammar error in the documentation. Does not affect the user.

- `type.DocumentationBug`: A flaw in the documentation that can potentially affect the user, e.g., a missing step, a wrong instruction.

- If you disagree with the original severity assigned to the bug, you may change it to the correct level, in which case add a comment justifying the change. All such changes will be double-checked by the teaching team. You will lose marks for unreasonable lowering of severity.

Bug Severity labels:

- `severity.Low`: A flaw that is unlikely to affect normal operations of the product. Appears only in very rare situations and causes a minor inconvenience only.

- `severity.Medium`: A flaw that causes occasional inconvenience to some users, but they can continue to use the product.

- `severity.High`: A flaw that affects most users and causes major problems for users, i.e., makes the product almost unusable for most users.

- Decide who should fix the bug. Use the `Assignees` field to assign the issue to that person(s). There is no need to actually fix the bug, though. It's simply an indication/acceptance of responsibility. If there is no assignee, we will distribute the penalty for that bug (if any) among all team members.

- Assign owners for the rejected bugs as well. If the bug is incorrectly rejected, the penalty will apply only to the owner; if no owner is assigned, the penalty will be distributed to the team.

- We recommend (but do not enforce) that the feature owner should be assigned bugs related to the feature, even if the bug was caused indirectly by someone else. Reason: the feature owner should have defended the feature against bugs using automated tests and defensive coding techniques.

- Add a comment explaining your choice of labels and assignees.

- We recommend choosing `type.*`, `severity.*` and assignee even for bugs you are not accepting. Reason: your non-acceptance may be rejected by the tutor later, in which case we need to grade it as an accepted bug.
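The default-label and duplicate rules in this section (a report without a `severity.*` label counts as `severity.Low`, one without a `type.*` label counts as `type.FunctionalityBug`, and among duplicates the copy with the highest severity is kept) can be summarised in a short Java sketch. The `Issue` class and all method names are hypothetical; they only model label strings and do not call the GitHub API.

```java
import java.util.Comparator;
import java.util.List;
import java.util.Set;

/**
 * Hypothetical sketch of the default-label and duplicate rules for PE bug reports.
 * Issues are modelled only by their number and label names; no GitHub API is involved.
 */
public class BugTriageSketch {

    // Declared from lowest to highest, so the natural enum order ranks severity.
    public enum Severity { LOW, MEDIUM, HIGH }

    public static final class Issue {
        public final int number;
        public final Set<String> labels;

        public Issue(int number, Set<String> labels) {
            this.number = number;
            this.labels = labels;
        }
    }

    /** An issue without a severity.* label is treated as severity.Low. */
    public static Severity severityOf(Issue issue) {
        if (issue.labels.contains("severity.High")) {
            return Severity.HIGH;
        }
        if (issue.labels.contains("severity.Medium")) {
            return Severity.MEDIUM;
        }
        return Severity.LOW;
    }

    /** An issue without a type.* label is treated as type.FunctionalityBug. */
    public static String typeOf(Issue issue) {
        return issue.labels.stream()
                .filter(label -> label.startsWith("type."))
                .findFirst()
                .orElse("type.FunctionalityBug");
    }

    /** Among copies of the same bug, keep the most severe one; the others get the duplicate tag. */
    public static Issue issueToKeep(List<Issue> copies) {
        return copies.stream()
                .max(Comparator.comparing((Issue issue) -> severityOf(issue)))
                .orElseThrow(IllegalArgumentException::new);
    }

    public static void main(String[] args) {
        Issue a = new Issue(1, Set.of("severity.Low"));
        Issue b = new Issue(2, Set.of("severity.High", "type.FeatureFlaw"));
        Issue c = new Issue(3, Set.of());
        System.out.println(severityOf(c) + " " + typeOf(c)); // LOW type.FunctionalityBug
        System.out.println(issueToKeep(List.of(a, b, c)).number); // 2
    }
}
```

Keeping the most severe copy means the bug is processed at its highest reported severity; the remaining copies only need the `duplicate` tag.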

Grading: Taking part in the PE dry run is strongly encouraged as it can affect your grade in the following ways.

- If the product you are allocated to test in the Practical Exam (at v1.4) has a very low bug count, we will consider your performance in the PE dry run as well when grading the PE.

- PE dry run will help you practice for the actual PE.

- Taking part in the PE dry run will earn you participation points.

- There is no penalty for bugs reported in your product. Every bug you find is a win-win for you and the team whose product you are testing.

Objectives:

- To train you to do manual testing, bug reporting, bug triaging, bug fixing, communicating with users/testers/developers, evaluating products, etc.

- To help you improve your product before the final submission.

Preparation: same as the Preparation steps listed for the PE above.

During the session:

- Take note of the team you are to test. It will be distributed via the LumiNUS gradebook.

- Download the latest jar file from the team's GitHub page. Copy it to an empty folder.

- Confirm you are testing the allocated product by comparing the product UI with the UI screenshot seen in the project dashboard.

Testing instructions: same as the Testing instructions for PE and PE Dry Run given above.

At the end of the project each student is required to submit a Project Portfolio Page.

Objective:

- For you to use (e.g., in your resume) as a well-documented data point of your SE experience

- For us to use as a data point to evaluate your:

  - contributions to the project

  - documentation skills

Sections to include:

- Overview: A short overview of your product to provide some context to the reader.

- Summary of Contributions:

  - Code contributed: Give a link to your code on the Project Code Dashboard, which should be https://nuscs2113-ay1819s2.github.io/dashboard-beta/#=undefined&search=github_username_in_lower_case (replace github_username_in_lower_case with your actual username in lower case, e.g., johndoe). This link is also available on the Project List Page, linked to the icon under your photo.

  - Features implemented: A summary of the features you implemented. If you implemented multiple features, we recommend indicating which one is the biggest.

  - Other contributions:

    - Contributions to project management, e.g., setting up project tools, managing releases, managing the issue tracker, etc.

    - Evidence of helping others, e.g., responses you posted in our forum, bugs you reported in other teams' products.

    - Evidence of technical leadership, e.g., sharing useful information in the forum.

- Relevant descriptions/terms/conventions: Include all relevant details necessary to understand the document, e.g., conventions, symbols, or labels, including any that were not introduced by you.

- Contributions to the User Guide: Reproduce the parts of the User Guide that you wrote. This can include features you implemented as well as features you propose to implement.

  The purpose of allowing you to include proposed features is to give you more flexibility to show your documentation skills. For example, you can bring in a proposed feature just to have an opportunity to use a UML diagram type not used by the actual features.

- Contributions to the Developer Guide: Reproduce the parts of the Developer Guide that you wrote. Ensure there is enough content to evaluate your technical documentation skills and UML modelling skills. You can include descriptions of your design/implementations, possible alternatives, pros and cons of alternatives, etc.

- If you plan to use the PPP in your resume, you can also include your SE work outside of the module (this will not be graded).

Format:

- File name: docs/team/github_username_in_lower_case.adoc, e.g., docs/team/johndoe.adoc

- Follow the example in AddressBook-Level4.

- 💡 You can use Asciidoc's include feature to include sections from the Developer Guide or the User Guide in your PPP. Follow the example in the sample.

- It is assumed that all content in the PPP was written primarily by you. If any section was written by someone else (e.g., someone else described the feature in the User Guide but you implemented the feature), clearly state that the section was written by someone else (e.g., Start of Extract [from: User Guide] written by Jane Doe). Reason: your writing skills will be evaluated based on the PPP.
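
Both the Project Code Dashboard link and the PPP file name are derived from your GitHub username in lower case. If you want to generate them rather than type them by hand, the sketch below shows one way to do it. The URL template and the docs/team/ path come from these instructions; the function name ppp_links is a hypothetical helper, not part of any course tooling.

```python
# Hypothetical helper (not official course tooling): derives the dashboard
# link and the PPP file path from a GitHub username, using the templates
# given in the PPP instructions.
DASHBOARD_TEMPLATE = (
    "https://nuscs2113-ay1819s2.github.io/dashboard-beta/"
    "#=undefined&search={username}"
)


def ppp_links(github_username: str) -> dict[str, str]:
    """Return the dashboard URL and PPP file path for a GitHub username.

    Both values use the username in lower case, as the instructions require.
    """
    username = github_username.strip().lower()
    if not username:
        raise ValueError("GitHub username must not be empty")
    return {
        "dashboard_url": DASHBOARD_TEMPLATE.format(username=username),
        "ppp_path": f"docs/team/{username}.adoc",
    }


if __name__ == "__main__":
    links = ppp_links("JohnDoe")
    print(links["dashboard_url"])  # ...dashboard-beta/#=undefined&search=johndoe
    print(links["ppp_path"])       # docs/team/johndoe.adoc
```

Lower-casing is done once, up front, so the link and the file name can never disagree about the username's case.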

Page limit:

| Content | Limit (pages) |
|---|---|
| Overview + Summary of contributions | 0.5-1 (soft limit) |
| Contributions to the User Guide | 1-3 (soft limit) |
| Contributions to the Developer Guide | 3-6 (soft limit) |
| Total | 5-10 (strict) |

- The page limits given above apply after converting to PDF format. The amount of content you need is less than these numbers suggest, because the HTML → PDF conversion adds a lot of spacing around content.

- Reason for the page limit: these submissions are peer-graded (in the PE), which must be done within a limited time span.

If you have more content than the limits given above, you can give representative samples of the UG and DG that showcase your documentation skills. Those samples should be understandable on their own. For the parts left out, you can give an abbreviated version and refer the reader to the full UG/DG for more details.

This is similar to giving extra details as appendices: the reader will look at the UG/DG only if the PPP is not enough to make a judgment. For example, when judging documentation quality, if the part in the PPP is not well-written, there is no point reading the rest in the main UG/DG. That's why you need to put the most representative parts of your writing in the PPP and still give an abbreviated version of the rest in the PPP itself. Even when judging the quantity of work, the reader should be able to get a good sense of it by combining what is quoted in the PPP with your abbreviated description of the missing parts. There is no guarantee that the evaluator will read the full document.

After the session:

- We'll transfer the relevant bug reports to your repo over the weekend. Once you have received the bug reports for your product, it is up to you to decide whether to act on the reported issues before the final submission (v1.4). For some issues, the correct decision could be to reject them or to postpone them to a version beyond v1.4.

- You can post in the issue thread to communicate with the tester e.g. to ask for more info, etc. However, the tester is not obliged to respond.

- 💡 Do not argue with the issue reporter to try to convince them that your way is correct/better. If anything, you can gently explain the rationale for the current behavior, but do not waste time getting involved in long arguments. If you think the suggestion/bug is unreasonable, just thank the reporter for their view and close the issue.